GenAI with Spring AI Framework

- Anand Nerurkar

- Sep 14, 2024

- 4 min read

Updated: Sep 15, 2024

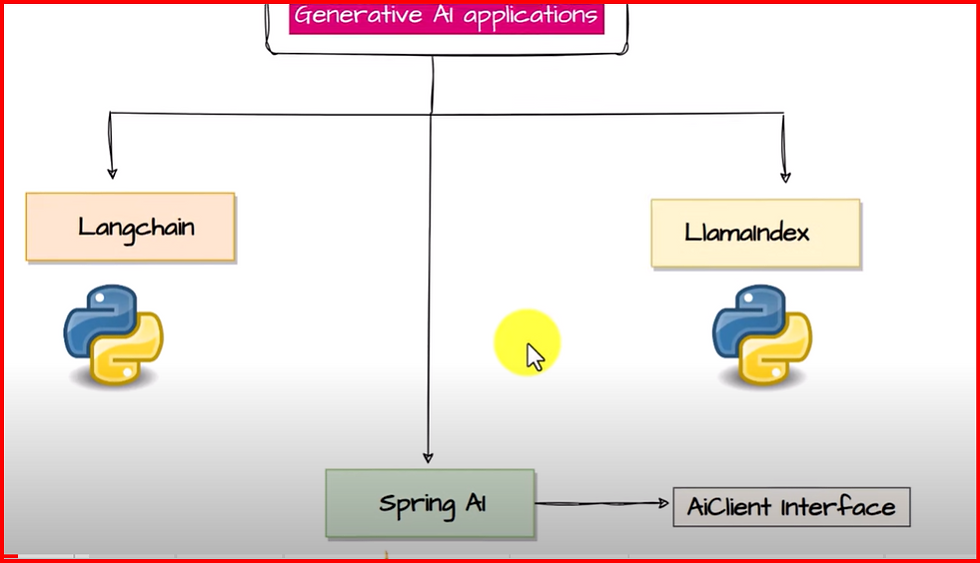

Most frameworks for building GenAI applications, such as LangChain and LlamaIndex, are Python based. Java developers can use Spring AI instead.

Spring AI is an application framework for AI engineering. Its goal is to apply Spring ecosystem design principles, such as portability and modular design, to the AI domain and to promote POJOs as the building blocks of an AI application.

Features

Portable API support across AI providers for Chat, text-to-image, and Embedding models. Both synchronous and stream API options are supported. Dropping down to access model-specific features is also supported.

Chat Models

- Amazon Bedrock
  - Anthropic
  - Cohere's Command
  - AI21 Labs' Jurassic-2
  - Meta's LLama
  - Amazon's Titan
- Anthropic Claude
- Azure Open AI
- Google Vertex AI
  - PaLM2
  - Gemini
- Groq
- HuggingFace - access thousands of models, including those from Meta such as Llama
- MistralAI
- MiniMax
- Moonshot AI
- Ollama - run AI models on your local machine
- OpenAI
- QianFan
- ZhiPu AI

Text-to-image Models

- OpenAI with DALL-E
- StabilityAI

Transcription (audio to text) Models

- OpenAI

Embedding Models

- Azure OpenAI
- Amazon Bedrock
  - Cohere
  - Titan
- Mistral AI
- MiniMax
- Ollama
- (ONNX) Transformers
- OpenAI
- PostgresML
- QianFan
- VertexAI
  - Text
  - Multimodal
  - PaLM2
- ZhiPu AI

The Vector Store API provides portability across different providers, featuring a novel SQL-like metadata filtering API that maintains portability.

Vector Databases

- Azure AI Service
- Apache Cassandra
- Chroma
- Elasticsearch
- GemFire
- Milvus
- MongoDB Atlas
- Neo4j
- OpenSearch
- Oracle
- PGvector
- Pinecone
- Qdrant
- Redis
- SAP Hana
- Typesense
- Weaviate
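The idea of metadata filtering combined with similarity search can be sketched without any library. The snippet below is a minimal plain-Java illustration, not Spring AI's Vector Store API: the `Doc` class, the `search` method, the sample data, and the toy two-dimensional embeddings are all hypothetical. It first keeps only documents whose metadata passes a filter and then ranks the survivors by cosine similarity to the query vector.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.Map;
import java.util.function.Predicate;

// Plain-Java sketch; every name here is hypothetical, not a Spring AI class.
class VectorStoreSketch {

    static final class Doc {
        final String text;
        final double[] embedding;            // toy vector; normally produced by an embedding model
        final Map<String, String> metadata;

        Doc(String text, double[] embedding, Map<String, String> metadata) {
            this.text = text;
            this.embedding = embedding;
            this.metadata = metadata;
        }
    }

    // Cosine similarity between two vectors of equal length.
    static double cosine(double[] a, double[] b) {
        double dot = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        return dot / (Math.sqrt(normA) * Math.sqrt(normB));
    }

    // Keep documents whose metadata passes the filter, then return the topK most similar texts.
    static List<String> search(List<Doc> docs, double[] query,
                               Predicate<Map<String, String>> filter, int topK) {
        List<Doc> matches = new ArrayList<>();
        for (Doc d : docs) {
            if (filter.test(d.metadata)) {
                matches.add(d);
            }
        }
        // Negate the score so the most similar document sorts first.
        matches.sort(Comparator.comparingDouble((Doc d) -> -cosine(d.embedding, query)));
        List<String> result = new ArrayList<>();
        for (Doc d : matches.subList(0, Math.min(topK, matches.size()))) {
            result.add(d.text);
        }
        return result;
    }

    // Made-up sample data for the demonstration.
    static List<Doc> sampleDocs() {
        return List.of(
            new Doc("Cricket rules", new double[] {1.0, 0.0}, Map.of("category", "sports")),
            new Doc("Football history", new double[] {0.8, 0.6}, Map.of("category", "sports")),
            new Doc("Movie reviews", new double[] {0.9, 0.1}, Map.of("category", "movies")));
    }

    public static void main(String[] args) {
        List<String> hits = search(sampleDocs(), new double[] {1.0, 0.1},
                meta -> "sports".equals(meta.get("category")), 2);
        System.out.println(hits);
    }
}
```

In this sketch the "Movie reviews" document is close to the query vector but is excluded by the metadata filter, which is the behaviour a filtered similarity search is meant to provide.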

Spring Boot Auto Configuration and Starters for AI Models and Vector Stores.

Function calling: you can declare java.util.function.Function implementations to OpenAI models for use in their prompt responses. You can provide these functions directly as objects, or refer to them by name if they are registered as a @Bean in the application context. This feature minimizes unnecessary code and lets the AI model ask for more information to fulfill its response.

Models that support function calling:

- OpenAI
- Azure OpenAI
- VertexAI
- Mistral AI
- Anthropic Claude
- Groq
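To make the function calling idea concrete, here is a minimal plain-Java sketch of the dispatch step. It is not Spring AI's API: the `FunctionCallingSketch` registry, the `currentWeather` function name, and the stubbed reply are assumptions made for illustration. The point is only that functions are registered under a name as `java.util.function.Function` objects, and that a model's request to call one can be resolved by that name.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Function;

// Plain-Java sketch of name-based function dispatch; all names are hypothetical.
class FunctionCallingSketch {

    // Registry of callable functions, keyed by the name a model would refer to.
    private final Map<String, Function<String, String>> functions = new HashMap<>();

    void register(String name, Function<String, String> fn) {
        functions.put(name, fn);
    }

    // Resolve and invoke a function that the model asked for by name.
    String dispatch(String name, String argument) {
        Function<String, String> fn = functions.get(name);
        if (fn == null) {
            throw new IllegalArgumentException("Unknown function: " + name);
        }
        return fn.apply(argument);
    }

    public static void main(String[] args) {
        FunctionCallingSketch registry = new FunctionCallingSketch();
        // Stub implementation; a real function would call a weather service.
        registry.register("currentWeather", city -> "Sunny in " + city);
        System.out.println(registry.dispatch("currentWeather", "Pune"));
    }
}
```

In a real application the result of the function would be sent back to the model so it can finish its answer; that round trip is left out here.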

ETL framework for Data Engineering

The core job of the ETL framework is to move documents to model providers by way of a Vector Store. The framework is based on Java functional programming concepts, which helps you chain multiple steps together.

It can read documents in various formats, including PDF, JSON, and more.

The framework allows for data manipulation to suit your needs. This often involves splitting documents to adhere to context window limitations and enhancing them with keywords for improved document retrieval effectiveness.

Finally, processed documents are stored in the Vector Database, making them accessible for future retrieval.
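The read, transform, and store steps above can be sketched with the standard functional interfaces. This is a plain-Java illustration under assumptions, not Spring AI's ETL classes: documents are plain strings, the `splitter` and `run` helpers are hypothetical, and an in-memory list stands in for the vector database.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.function.Consumer;
import java.util.function.Function;
import java.util.function.Supplier;

// Plain-Java sketch of a reader -> transformer -> writer chain; names are hypothetical.
class EtlSketch {

    // Transform step: split each document into chunks of at most maxWords words.
    static Function<List<String>, List<String>> splitter(int maxWords) {
        return docs -> {
            List<String> chunks = new ArrayList<>();
            for (String doc : docs) {
                if (doc.trim().isEmpty()) {
                    continue; // nothing to split
                }
                String[] words = doc.trim().split("\\s+");
                for (int i = 0; i < words.length; i += maxWords) {
                    int end = Math.min(i + maxWords, words.length);
                    chunks.add(String.join(" ", Arrays.copyOfRange(words, i, end)));
                }
            }
            return chunks;
        };
    }

    // Chain the three steps: read, transform, write.
    static void run(Supplier<List<String>> reader,
                    Function<List<String>, List<String>> transformer,
                    Consumer<List<String>> writer) {
        writer.accept(transformer.apply(reader.get()));
    }

    public static void main(String[] args) {
        List<String> store = new ArrayList<>(); // stands in for a vector database
        run(() -> List.of("India won the T20 world cup final"), splitter(3), store::addAll);
        System.out.println(store);
    }
}
```

Splitting by word count is only a stand-in for splitting by tokens to respect a model's context window.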

Extensive reference documentation, sample applications, and workshop/course material.

Future releases will build upon this foundation to provide access to additional AI Models, for example, the Gemini multi-modal model just released by Google, a framework for evaluating the effectiveness of your AI application, more convenience APIs, and features to help solve the “query/summarize my documents” use cases. Check GitHub for details on upcoming releases.

Depending on the AI model, we need to add the matching Spring AI library so that the model can be made available as a POJO in our AI application.

Sample Application

===

We will use an OpenAI model for our AI application. For that, we need to include the OpenAI library and generate the project with the Spring starter.

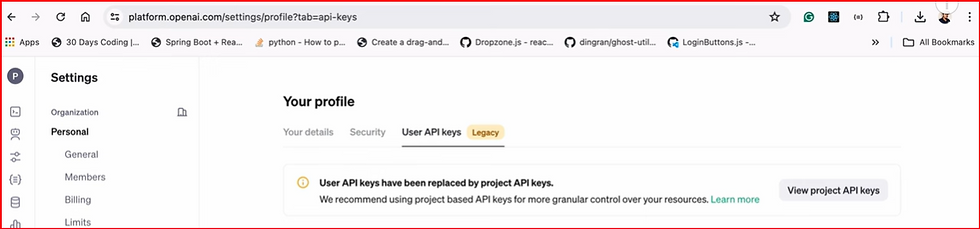

Depending on the AI model, we need to register with the corresponding AI platform and get API keys. In our case we are using the OpenAI platform and its API keys.

Once the project is created, import it into your IDE.

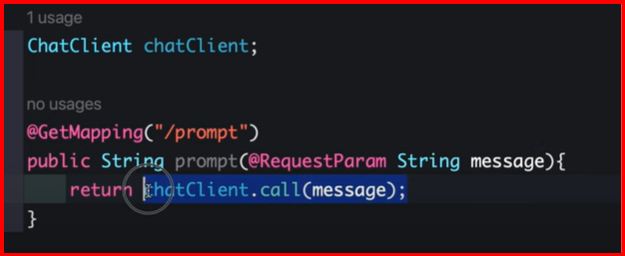

Let us create an AIController.

To call the OpenAI ChatGPT model API, we use the chat client library.

Each AI provider, such as Amazon, Azure AI, or Gemini, has its own chat client implementation library.

Since we are using the OpenAI chat implementation, the OpenAIChatClient library is added.
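The reason a controller can stay the same while the provider library changes is that it depends on an abstraction rather than on one provider. The sketch below shows that design in plain Java only. It is not the article's original controller and not Spring AI's API: the `ChatBackend` interface, the stub backends, and this `AiController` class are hypothetical stand-ins.

```java
// Plain-Java sketch of provider portability; all names are hypothetical.
class PortableChatSketch {

    // Provider-neutral contract that the controller depends on.
    interface ChatBackend {
        String chat(String userText);
    }

    // The controller only knows the contract, never a concrete provider.
    static final class AiController {
        private final ChatBackend backend;

        AiController(ChatBackend backend) {
            this.backend = backend;
        }

        String ask(String message) {
            return backend.chat(message);
        }
    }

    public static void main(String[] args) {
        // Stub backends; real ones would call the provider's API.
        ChatBackend openAiStub = text -> "[openai-stub] " + text;
        ChatBackend ollamaStub = text -> "[ollama-stub] " + text;

        System.out.println(new AiController(openAiStub).ask("Tell me a joke"));
        System.out.println(new AiController(ollamaStub).ask("Tell me a joke"));
    }
}
```

Swapping the backend changes which provider answers without touching the controller code.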

Configure application.yml:

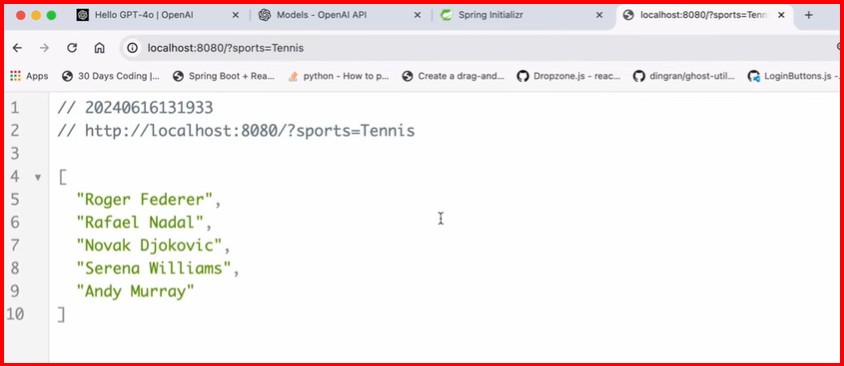

Start the server, call the API, and check the output.

Now we will make use of a prompt and a prompt template.

We create the prompt message "list of 5 most popular personalities in {sports}". Here sports is a dynamic value that we take from the request parameter, and we use it to create a PromptTemplate.

From the PromptTemplate we then create a prompt.
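What a prompt template does with the `{sports}` placeholder can be sketched in a few lines. This is a plain-Java illustration of the substitution step only, assuming a hypothetical `render` helper; it is not Spring AI's PromptTemplate class.

```java
import java.util.Map;

// Plain-Java sketch of placeholder substitution; the render helper is hypothetical.
class PromptTemplateSketch {

    // Replace every {name} placeholder with the matching value from the map.
    static String render(String template, Map<String, String> variables) {
        String result = template;
        for (Map.Entry<String, String> entry : variables.entrySet()) {
            result = result.replace("{" + entry.getKey() + "}", entry.getValue());
        }
        return result;
    }

    public static void main(String[] args) {
        String template = "List of 5 most popular personalities in {sports}";
        // In the controller, this value would come from the request parameter.
        System.out.println(render(template, Map.of("sports", "cricket")));
    }
}
```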

Test the endpoint.

Next, we try the same prompt as a user message.

To make the endpoint work only for sports, or for any other particular category, we need to use a system message.

The endpoint now answers only for sports categories. For a request such as sport=hollywood, it replies with the message defined in the system message prompt.
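The split between a system message and a user message can be pictured as an ordered list of role-tagged messages sent to the model. The sketch below is plain Java with hypothetical `Message` and `buildPrompt` names, and the system text is an assumed wording rather than the one used in the original screenshots.

```java
import java.util.ArrayList;
import java.util.List;

// Plain-Java sketch of a prompt built from role-tagged messages; names are hypothetical.
class SystemMessageSketch {

    static final class Message {
        final String role;
        final String content;

        Message(String role, String content) {
            this.role = role;
            this.content = content;
        }

        @Override
        public String toString() {
            return role + ": " + content;
        }
    }

    // Assumed wording for the restriction; adjust to your own category.
    static final String SYSTEM_TEXT =
        "You answer questions about sports only. "
        + "For any other category, reply that you can only help with sports.";

    // The system message comes first so it constrains how the user message is answered.
    static List<Message> buildPrompt(String userText) {
        List<Message> messages = new ArrayList<>();
        messages.add(new Message("system", SYSTEM_TEXT));
        messages.add(new Message("user", userText));
        return messages;
    }

    public static void main(String[] args) {
        buildPrompt("List of 5 most popular personalities in hollywood")
            .forEach(System.out::println);
    }
}
```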

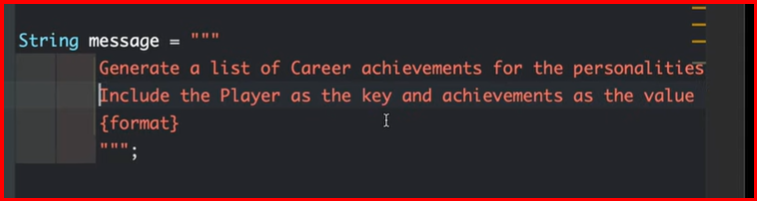

So far we are getting the output as a string. Now we will see how to format the data as JSON or any other format.

With the GPT-4o model, we can only use a PromptTemplate.

To get the output in a particular format, Spring AI provides converters that turn the response into a list, a map, or another format.

The list converter returns a List<String>.
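Conceptually, a list converter does two things: it adds a format instruction to the prompt, and it parses the reply into a list. The sketch below shows both in plain Java. It is an illustration under assumptions, not Spring AI's converter: the `FORMAT_INSTRUCTIONS` text and the `parse` method are hypothetical, and the reply string is hard-coded instead of coming from a model.

```java
import java.util.ArrayList;
import java.util.List;

// Plain-Java sketch of converting a comma-separated reply to a list; names are hypothetical.
class ListOutputSketch {

    // Appended to the prompt so the model replies in a parseable shape.
    static final String FORMAT_INSTRUCTIONS =
        "Respond with a comma-separated list of values and nothing else.";

    // Split the reply on commas, trim whitespace, and drop empty entries.
    static List<String> parse(String modelReply) {
        List<String> items = new ArrayList<>();
        for (String part : modelReply.split(",")) {
            String item = part.trim();
            if (!item.isEmpty()) {
                items.add(item);
            }
        }
        return items;
    }

    public static void main(String[] args) {
        String prompt = "List of 5 most popular personalities in cricket. " + FORMAT_INSTRUCTIONS;
        System.out.println(prompt);
        // Hard-coded stand-in for a model reply.
        System.out.println(parse("Sachin Tendulkar, Virat Kohli ,MS Dhoni"));
    }
}
```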

For BeanOutputConverter

==

For this converter, the prompt needs to change.
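One way to see why the prompt has to change is that the model must be told which fields the target bean expects. The sketch below derives such an instruction from a class by reflection. It is plain Java under assumptions: the `Player` bean, its fields, and the `formatInstructions` helper are hypothetical, and this is not how Spring AI's BeanOutputConverter is implemented.

```java
import java.lang.reflect.Field;
import java.util.StringJoiner;

// Plain-Java sketch that builds a format instruction from a bean's fields; names are hypothetical.
class BeanFormatSketch {

    // Hypothetical target bean for the model's reply.
    static final class Player {
        String name;
        String team;
        int matches;
    }

    // Describe the expected JSON shape using the declared field names and types.
    static String formatInstructions(Class<?> type) {
        StringJoiner fields = new StringJoiner(", ", "{", "}");
        for (Field field : type.getDeclaredFields()) {
            if (field.isSynthetic()) {
                continue; // skip compiler-generated fields
            }
            fields.add("\"" + field.getName() + "\": <" + field.getType().getSimpleName() + ">");
        }
        return "Reply only with JSON of the form " + fields + ".";
    }

    public static void main(String[] args) {
        String prompt = "Give details of one popular cricket player. "
            + formatInstructions(Player.class);
        System.out.println(prompt);
    }
}
```

Turning the JSON reply back into a `Player` object would need a JSON library, which is left out to keep the sketch dependency free.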

RAG (Retrieval Augmented Generation )

===

An LLM is normally trained on generic data and only up to a cutoff date (June 2021 here), so it can return old or stale answers. When we want specific data, from a specific source, in a specified context, we use RAG.

For example, take the T20 World Cup: who won it? India won the T20 World Cup, but the LLM does not have this data because it was not trained on or fed current data.

With RAG, we retrieve the current data and pass it to the LLM together with the context, query, and prompt, so that the LLM returns up-to-date answers. This improves user trust.

RAG is also cost efficient: training a new LLM is expensive, so we combine an existing LLM with RAG and context to provide accurate, current data.

How do we build RAG into an application? This is where we provide the source of truth: external data such as a file system, a database, or a vector database.
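The retrieve-then-augment flow can be sketched in plain Java. This is a toy illustration under assumptions, not a Spring AI implementation: retrieval uses simple word overlap instead of embeddings and a vector database, the two sample documents are made up for the demonstration, and the `retrieve` and `augment` helpers are hypothetical. The augmented prompt is what would be sent to the LLM.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Plain-Java sketch of retrieval augmented generation; names and data are hypothetical.
class RagSketch {

    // Lower-case the text and split it into a set of words.
    static Set<String> words(String text) {
        return new HashSet<>(Arrays.asList(text.toLowerCase().split("\\W+")));
    }

    // Retrieval: pick the document that shares the most words with the question.
    static String retrieve(List<String> documents, String question) {
        Set<String> questionWords = words(question);
        String best = "";
        int bestScore = -1;
        for (String doc : documents) {
            Set<String> overlap = words(doc);
            overlap.retainAll(questionWords);
            if (overlap.size() > bestScore) {
                bestScore = overlap.size();
                best = doc;
            }
        }
        return best;
    }

    // Augmentation: put the retrieved context in front of the question.
    static String augment(List<String> documents, String question) {
        return "Answer using only this context:\n" + retrieve(documents, question)
            + "\n\nQuestion: " + question;
    }

    public static void main(String[] args) {
        List<String> sourceOfTruth = List.of(
            "India won the T20 World Cup.",
            "Spring AI supports many vector stores.");
        System.out.println(augment(sourceOfTruth, "Who won the T20 world cup?"));
    }
}
```

A real setup would replace the word overlap with an embedding model and a similarity search against a vector database, but the shape of the flow stays the same.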
