Generative AI Basics

- Anand Nerurkar

- Nov 12, 2023

Updated: Jun 10, 2024

Statistics is the foundation of generative AI. Generative algorithms frequently employ methods such as Bayesian inference, Markov chains, and maximum likelihood estimation to create new data. More intricate architectural designs are built on top of these statistical models.
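To make the statistical idea concrete, here is a minimal sketch of a word-level Markov chain text generator. The corpus, the function names `build_chain` and `generate`, and the fixed seed are all assumptions chosen for illustration. Keeping repeated successors in each list means sampling follows the observed frequencies, which corresponds to the maximum likelihood estimate of the transition probabilities.

```python
import random
from collections import defaultdict

def build_chain(text):
    """Map each word to the list of words observed right after it."""
    words = text.split()
    chain = defaultdict(list)
    for current, following in zip(words, words[1:]):
        chain[current].append(following)  # duplicates kept: they encode frequency
    return chain

def generate(chain, start, length, seed=0):
    """Sample a word sequence by repeatedly picking an observed successor."""
    rng = random.Random(seed)
    word = start
    output = [word]
    for _ in range(length - 1):
        successors = chain.get(word)
        if not successors:  # dead end: no word ever followed this one
            break
        word = rng.choice(successors)
        output.append(word)
    return " ".join(output)

# Hypothetical toy corpus, for illustration only.
corpus = "the bank approves the loan and the bank reviews the loan request"
chain = build_chain(corpus)
print(generate(chain, "the", 8))
```

Every generated pair of adjacent words was seen in the corpus, so the output is "new" only in how the pieces are recombined. Larger models generalize far beyond this, but the sample-from-learned-probabilities idea is the same.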

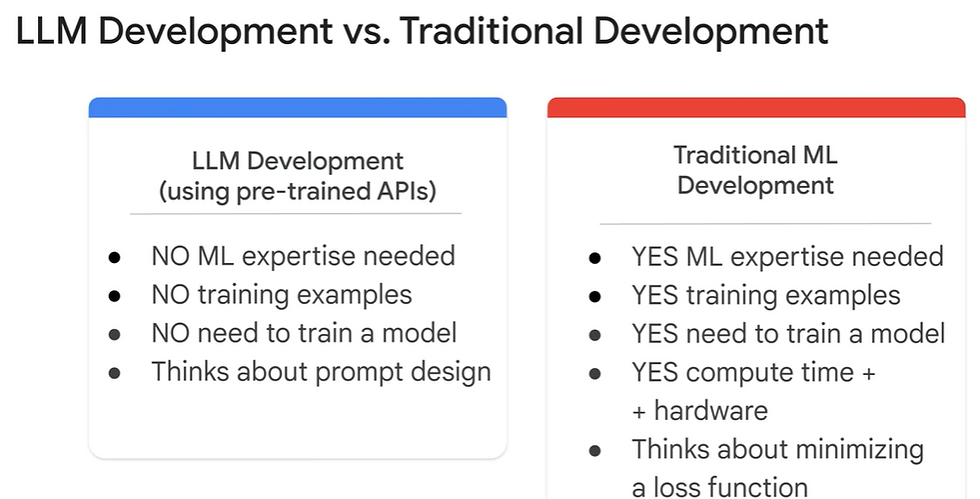

Large Language Model

A large language model (LLM) is a type of deep learning model that can be pre-trained and then fine-tuned for a specific purpose.

LLM are trained to solve common problem like

Text Classification

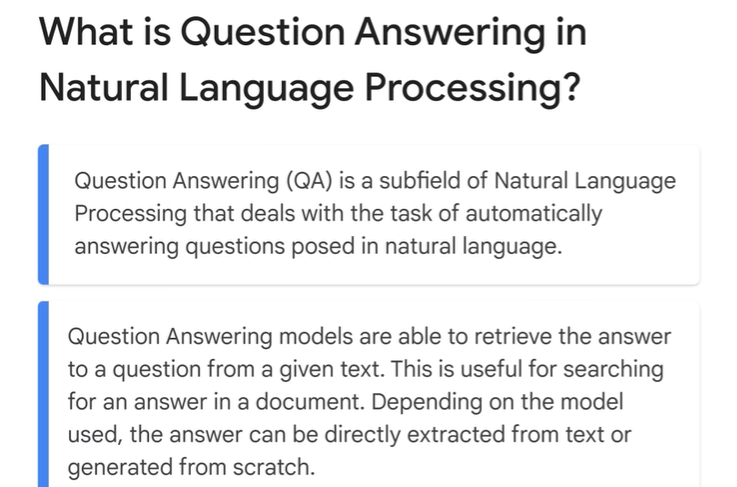

Question & Answer

Document Summarization

Text Generation

Google LLM Model

Pathways Language Model (PaLM)

PaLM is a transformer-based model.

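LLM Use Case: Questions & Answers

For each question, the model can be steered toward the desired response. This is achieved through prompt design, that is, how the instruction, the context, and the question itself are worded.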
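As a rough illustration of prompt design, the sketch below assembles a question-answering prompt from an instruction, optional worked examples, a context passage, and the question. The function name `build_qa_prompt`, the instruction wording, and the banking sample data are assumptions made for this example. No real model API is called, and the resulting string would be passed to whichever LLM you use.

```python
def build_qa_prompt(question, context, examples=()):
    """Assemble an instruction, optional worked examples, context, and the question."""
    lines = [
        "Answer the question using only the context provided.",
        "If the answer is not in the context, reply 'I don't know'.",
        "",
    ]
    # Few-shot examples show the model the expected answer style.
    for example_question, example_answer in examples:
        lines += [f"Q: {example_question}", f"A: {example_answer}", ""]
    # The trailing "A:" cues the model to continue with the answer.
    lines += [f"Context: {context}", f"Q: {question}", "A:"]
    return "\n".join(lines)

# Hypothetical sample data, for illustration only.
prompt = build_qa_prompt(
    question="What is the loan tenure?",
    context="The personal loan has a tenure of 5 years.",
    examples=[("What is the minimum balance?", "1,000")],
)
print(prompt)
```

Changing the instruction line or the examples changes what the model is nudged to produce, which is why the same question can yield different answers under different prompts.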

Underlying Technologies in Generative AI

Deep Learning and Neural Networks

Neural networks, specifically deep learning, are largely responsible for the capabilities of generative AI. Neural networks, which mimic the interconnected neuron structure of the human brain, enable complex decision-making.

CNNs, or convolutional neural networks

CNNs, primarily used for image-related tasks, have layers designed specifically to process the grid-like topology of image data. When working with image data, they frequently serve as building blocks inside more sophisticated generative algorithms such as GANs.
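The core operation of a convolutional layer can be sketched in a few lines of plain Python. This is a simplified illustration, assuming a single channel, no padding, and a stride of 1. The function name `conv2d`, the toy image, and the kernel are chosen for this example, and a real CNN would learn its kernel values during training.

```python
def conv2d(image, kernel):
    """Valid (no padding), stride-1 2D cross-correlation, as used in CNN layers."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            # Slide the kernel over the image patch whose top-left corner is (i, j).
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output

# Toy 3x4 image: dark on the left, bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
kernel = [[-1, 1]]  # responds to left-to-right increases in intensity
feature_map = conv2d(image, kernel)
print(feature_map)  # [[0, 1, 0], [0, 1, 0], [0, 1, 0]]
```

The feature map is non-zero only where the vertical edge sits, which shows how a small kernel sliding over the grid picks out local structure.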

RNNs (Recurrent Neural Networks)

RNNs are used for sequential data, such as time series or natural language. They retain a partial memory of earlier inputs because the state computed at one step is fed back in as input at the next step. This is especially helpful for tasks like audio and text generation.
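The feedback loop can be sketched with a one-unit recurrent cell. This is a minimal illustration, assuming scalar inputs and hand-picked weights (`w_x`, `w_h`, `b`) rather than trained ones. The function names are hypothetical.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """One step of a scalar recurrent cell: h_t = tanh(w_x * x_t + w_h * h_prev + b)."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(inputs, w_x=0.5, w_h=0.8, b=0.0):
    """Process a sequence, carrying the hidden state from each step to the next."""
    h = 0.0
    states = []
    for x in inputs:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states

# A single pulse followed by silence: the state decays but does not vanish at once.
states = run_rnn([1.0, 0.0, 0.0])
print(states)
```

Even though the last two inputs are zero, the hidden state stays positive and shrinks gradually. That lingering value is the "partial memory" of the earlier input.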

GANs, or generative adversarial networks

GANs comprise a Generator network that produces data and a Discriminator network that assesses it. Because the two networks are trained together in a game-like setting, the generator learns to produce high-quality data. Variational autoencoders (VAEs) are another class of generative model. They offer a probabilistic way to describe observations and are especially helpful for tasks that require generating data with particular, controlled properties.
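The game-like setting can be made concrete by looking at the two losses involved. The sketch below assumes the standard binary cross-entropy formulation with the commonly used non-saturating generator loss. The discriminator scores are made-up numbers, not outputs of a trained network.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: real samples should score near 1, fakes near 0."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: the generator wants fakes scored near 1."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs (probability that a sample is real).
d_real = [0.9, 0.8]
d_fake_early = [0.1, 0.2]  # early training: fakes are easy to spot
d_fake_late = [0.6, 0.7]   # later: fakes fool the discriminator more often

print(generator_loss(d_fake_early), generator_loss(d_fake_late))
print(discriminator_loss(d_real, d_fake_early), discriminator_loss(d_real, d_fake_late))
```

As the fakes become more convincing, the generator's loss falls while the discriminator's loss rises. Each network improves at the other's expense, which is the adversarial part of the name.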

Generative AI can also be misused to create deepfakes (digitally forged images or videos) and to launch harmful cybersecurity attacks on businesses, including nefarious requests that realistically mimic an employee's boss.

With techniques such as generative adversarial networks (GANs), a type of machine learning algorithm, generative AI can create convincingly authentic images, videos, and audio of real people.

Variational autoencoders (VAEs), neural networks with an encoder and a decoder, are suitable for generating realistic human faces, synthetic data for AI training, or even facsimiles of particular humans.
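For readers curious about what sits between a VAE's encoder and decoder, here is a small sketch of the latent sampling step, often called the reparameterization trick, and the KL term that keeps the latent space close to a standard normal. It assumes the usual diagonal Gaussian formulation. The function names and the `mu` and `log_var` values are invented stand-ins for what an encoder would output.

```python
import math
import random

def sample_latent(mu, log_var, rng):
    """Reparameterization: z = mu + sigma * eps, with eps drawn from N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """KL divergence from N(mu, sigma^2) to N(0, 1), summed over dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv)
                      for m, lv in zip(mu, log_var))

# Hypothetical encoder outputs for a 2-dimensional latent space.
mu = [0.5, -1.0]
log_var = [0.0, -2.0]

rng = random.Random(0)
z = sample_latent(mu, log_var, rng)  # this vector would be fed to the decoder
print(z, kl_to_standard_normal(mu, log_var))
```

Because new samples are produced by drawing `z` and decoding it, moving through the latent space changes the output's properties in a controlled way.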

Use Cases (Banking)
